Most people currently interact with AI through single conversations: opening a chatbot, asking a question, generating a draft, or seeking coding help. While this approach can be powerful and even transformative, it is increasingly limiting. The real shift is not just about smarter assistants, but about fundamentally changing the user’s role.

By 2027, the most valuable workplace skill may be managing a coordinated team of specialized agents that operate, collaborate, and make decisions on your behalf, rather than simply prompting a single AI effectively.

This represents a significant shift. Traditionally, software has functioned as a deterministic toolset: you click, it executes. Agent systems reverse this model. You define objectives, set constraints, and specify success criteria, while the system determines how to achieve them. In this environment, the key skill becomes designing systems of delegation, not just using software.

The transition from copilots to agents is not only a technical advancement; it is also a managerial one.

The workplace is already moving in this direction

This shift is not speculative; evidence of it is already visible across software, enterprise tools, and development environments.

IDC and other industry researchers project that by 2026, 80% of enterprise workplace applications will contain AI agents. That figure points to something bigger than feature growth. It signals a structural shift in which AI is becoming an operational layer built into how work gets done.

However, adoption remains uneven. Recent research indicates that only 11% of organizations currently use AI agents in production, while another 38% are actively working toward implementation. This gap highlights that, although the market recognizes the strategic value of AI agents, many organizations struggle to transition from experimentation to deployment.

The primary challenge is not building a single impressive agent, but integrating multiple agents across platforms, ensuring accountability, and managing what many teams now call “agent sprawl”: fragmented, loosely connected systems that are difficult to manage, audit, or optimize.

From single assistants to agent teams

Understanding the next phase requires examining how interaction models have evolved. In 2023, most users engaged with AI through chat interfaces designed for question-and-answer tasks. By 2024, copilots became integrated into productivity tools, suggesting code, drafting documents, and supporting workflows within familiar software.

In 2025 and 2026, the model is shifting again. AI systems are moving beyond simple prompts to execute multi-step workflows, make conditional decisions, and coordinate with other agents to achieve broader objectives.

Microsoft corporate vice president Charles Lamanna noted, “Copilot was Chapter One; Agents are Chapter Two.” This distinction is important. Copilots are reactive, waiting for human input. Agents are proactive; they interpret goals, break them into tasks, and act with less human intervention. This marks the point where AI shifts from a tool on the screen to an integral part of the workplace infrastructure.

Market trends reflect this shift. The AI agent market is projected to grow from approximately $7.84 billion in 2025 to $52.62 billion by 2030. This growth signifies not only increased investment, but also a fundamental change in how organizations structure work, moving from isolated AI assistance to coordinated agent ecosystems.

What managing AI agents actually looks like

In practice, managing agents involves designing a network of micro-specialists rather than deploying a single, general system. Effective teams break workflows into smaller, discrete functions and assign each to a specific agent, rather than attempting to create one agent for all tasks.

This approach makes systems easier to scale, debug, and improve over time.

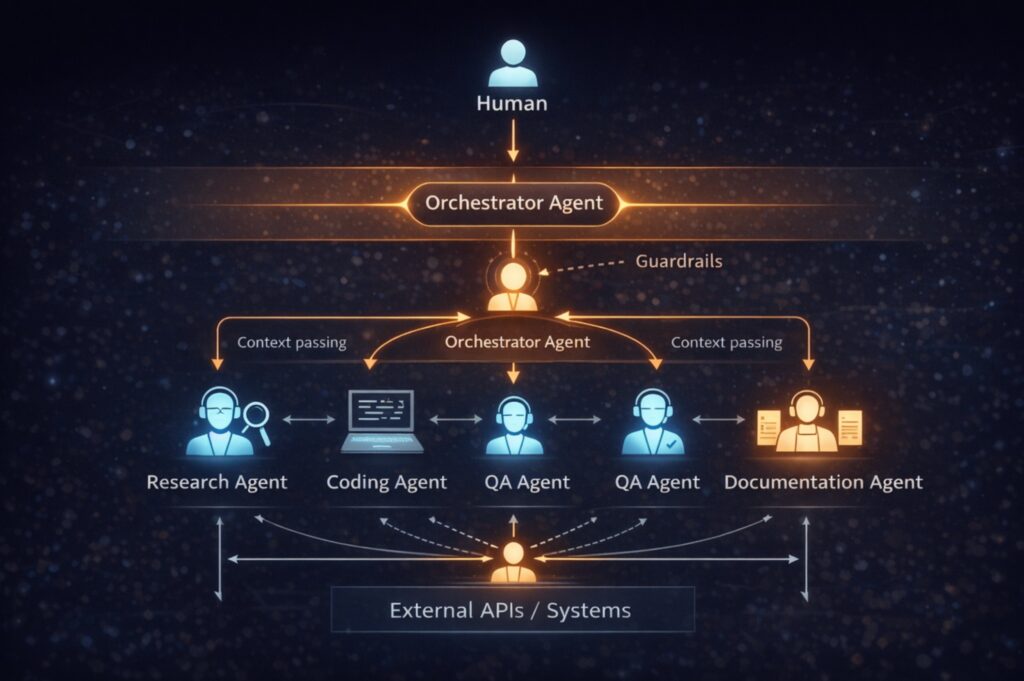

For example, a product manager scoping a new onboarding flow might provide an objective to an orchestrator agent: validate and scope a new onboarding process to improve conversions. A research agent reviews support tickets and customer feedback, a market agent benchmarks competitors, and a data agent gathers internal analytics. The orchestrator compiles this information into a draft product specification, identifies gaps, highlights uncertainties, and assigns follow-up tasks.
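The fan-out and compile pattern in this example can be sketched in a few lines of Python. This is a minimal illustration, not a real agent framework: the three specialist functions are hypothetical stand-ins that return canned findings, and the names `Finding` and `orchestrate` are assumptions made for this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Finding:
    agent: str
    summary: str
    open_questions: list[str] = field(default_factory=list)

# Hypothetical specialists: in a real system each would call a model or a tool.
def research_agent(objective: str) -> Finding:
    return Finding("research", f"Support-ticket themes relevant to: {objective}")

def market_agent(objective: str) -> Finding:
    return Finding("market", "Competitor onboarding benchmarks",
                   ["Pricing-page data missing"])

def data_agent(objective: str) -> Finding:
    return Finding("data", "Funnel analytics by onboarding step")

def orchestrate(objective: str,
                specialists: list[Callable[[str], Finding]]) -> dict:
    """Send the objective to each specialist, then compile a draft spec."""
    findings = [agent(objective) for agent in specialists]
    return {
        "objective": objective,
        "sections": {f.agent: f.summary for f in findings},
        # Gaps and uncertainties become follow-up tasks for a human to review.
        "follow_ups": [q for f in findings for q in f.open_questions],
    }

spec = orchestrate("improve onboarding conversion",
                   [research_agent, market_agent, data_agent])
```

The draft in `spec` keeps the open questions separate from the findings, which is where the human reviewer's judgment comes in.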

The human role evolves rather than disappears. Responsibilities shift to reviewing trade-offs, resolving ambiguities, and making judgment calls. The work is streamlined, not eliminated, and value moves from producing initial drafts to making informed decisions.

This shift is also evident in software engineering. In modern development environments, a research agent gathers context and generates technical specifications, a coding agent such as Claude Code implements features, a QA agent tests the work and identifies edge cases, and a documentation agent updates references. An orchestrator agent oversees task routing, manages dependencies, maintains shared context, and bridges human instruction with autonomous execution.

Many of these systems rely on shared state stores or memory layers. Agents read and write to structured context, often using vector databases for semantic retrieval, task graphs for dependency tracking, and event-based triggers for downstream actions. Without shared memory architecture, agents may duplicate work or produce inconsistent outputs.
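As a rough sketch of the dependency-tracking part, the snippet below uses Python's standard-library `graphlib` to run tasks in dependency order while each one reads from and writes to a shared context. The task names and the `run_task` helper are hypothetical, and a plain dictionary stands in for what would be a database or memory layer in production. Vector retrieval and event triggers are left out.

```python
from graphlib import TopologicalSorter

# Task graph: each task maps to the set of tasks it depends on.
task_graph = {
    "spec": set(),
    "code": {"spec"},
    "qa": {"code"},
    "docs": {"code"},
}

# Shared context: every agent reads upstream results from here
# and writes its own result back.
shared_context: dict[str, str] = {}

def run_task(name: str, context: dict[str, str]) -> str:
    """Hypothetical agent call: consumes upstream outputs, returns its own."""
    inputs = [context[dep] for dep in sorted(task_graph[name])]
    return f"{name}({', '.join(inputs)})"

# static_order() yields each task only after all of its dependencies.
for task in TopologicalSorter(task_graph).static_order():
    shared_context[task] = run_task(task, shared_context)
```

Because every task records its result in one place, the QA and documentation steps both see the same coding output instead of regenerating it.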

What matters most is coordination, not raw model sophistication.

Why coordination is the real challenge

This coordination challenge is a primary reason multi-agent systems often fail in production.

Agents do not inherently share understanding as high-performing human teams do. They require structured context, clear task ownership boundaries, and defined protocols for handoffs, stopping, and escalation.
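One way to make ownership and stopping rules explicit is to validate every handoff before it is accepted. The sketch below assumes a hypothetical ownership table and a hop limit of three; the agent names, the `Handoff` fields, and the limit are illustrative choices, not part of any standard.

```python
from dataclasses import dataclass

# Hypothetical ownership table: each task type has exactly one owner.
OWNERS = {
    "implement": "coding_agent",
    "test": "qa_agent",
    "document": "docs_agent",
}
MAX_HOPS = 3  # assumed stopping rule: too many handoffs means escalate

@dataclass(frozen=True)
class Handoff:
    task_type: str
    sender: str
    receiver: str
    context: str   # structured context passed along with the task
    hops: int = 0  # how many times this task has already been handed off

def route(handoff: Handoff) -> str:
    """Return 'accept', 'reject', or 'escalate_to_human' for a handoff."""
    if handoff.hops >= MAX_HOPS:
        return "escalate_to_human"
    if OWNERS.get(handoff.task_type) != handoff.receiver:
        return "reject"  # receiver does not own this task type
    return "accept"
```

For instance, `route(Handoff("test", "coding_agent", "qa_agent", "diff"))` is accepted, while the same task sent to `docs_agent` is rejected.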

As a result, production-ready agent systems are characterized less by impressive demonstrations and more by disciplined architecture: structured communication protocols, evaluation pipelines at each stage, and clear constraints on autonomy. Low-risk tasks can often be fully automated, while high-risk actions, such as modifying production systems, still require human approval.
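A constraint on autonomy can be as simple as a gate that checks an action's risk tier before it runs. The following is a minimal sketch under stated assumptions: the action names and the two-tier `Risk` scale are hypothetical, and unknown actions are treated as high risk by design.

```python
from enum import Enum

class Risk(Enum):
    LOW = 1
    HIGH = 2

# Hypothetical action catalog; a real one would be maintained per system.
ACTION_RISK = {
    "summarize_tickets": Risk.LOW,
    "update_docs": Risk.LOW,
    "deploy_to_production": Risk.HIGH,
}

def may_execute(action: str, human_approved: bool = False) -> bool:
    """Low-risk actions run automatically; high-risk ones need approval."""
    # Actions missing from the catalog default to HIGH, the safer choice.
    risk = ACTION_RISK.get(action, Risk.HIGH)
    return risk is Risk.LOW or human_approved
```

Defaulting unlisted actions to the high-risk tier means a newly added capability cannot run unattended until someone classifies it.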

A mature system defines expected outputs, confidence measures, and procedures for handling unsatisfactory results. It specifies whether the system should retry, escalate, or reroute issues. This level of structure distinguishes experimentation from deployment.
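The retry, escalate, or reroute decision can be written down as an explicit policy function. This sketch assumes a confidence score between 0 and 1 supplied by some evaluator; the thresholds, the `StageResult` fields, and the ordering of the checks are illustrative assumptions rather than a recommended configuration.

```python
from dataclasses import dataclass

@dataclass
class StageResult:
    output_valid: bool  # did the output match the expected format?
    confidence: float   # 0.0 to 1.0, assumed to come from an evaluator
    attempts: int       # how many times this agent has tried so far

def next_step(result: StageResult,
              min_confidence: float = 0.8,
              max_attempts: int = 2,
              fallback_available: bool = True) -> str:
    """Decide what happens to a stage's output."""
    if result.output_valid and result.confidence >= min_confidence:
        return "accept"
    if result.attempts < max_attempts:
        return "retry"     # same agent gets another attempt
    if fallback_available:
        return "reroute"   # hand the task to a different agent
    return "escalate"      # no options left: involve a human
```

Writing the policy as code makes it reviewable and testable, which is harder when the same rules live only in prompts.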

The interoperability bottleneck

If coordination is challenging within a single technology stack, it is even more difficult across multiple vendors.

Interoperability is currently the most significant technical obstacle in multi-agent deployments. Organizations often manage a patchwork of tools: a coding agent may run on Claude Code, a research agent may use LangChain, and a QA agent may be built with Microsoft AutoGen. Without common standards, each integration becomes a custom project.

Three protocols gained prominence in 2025 as attempts to solve that problem.

Model Context Protocol (MCP), developed by Anthropic, defines how agents use tools and share context across systems.

Agent2Agent (A2A), launched by Google, focuses on direct agent-to-agent communication. It was introduced with more than 50 partners, including Atlassian, Box, Salesforce, ServiceNow, and PayPal. The Linux Foundation later launched the Agent2Agent Protocol Project to help govern its development.

NANDA, MIT’s protocol, acts as a decentralized discovery layer for AI agents. As Forbes noted, “they’re making TCP/IP for AI, and it’s called NANDA.”

Microsoft has integrated A2A into Azure AI Foundry and Copilot Studio. This development suggests a future where organizations can combine agents from different vendors without building each integration from scratch. Agents will increasingly function as services within a broader software architecture, rather than as isolated tools.

The frameworks that will shape deployment

The tooling landscape is dividing into two categories: code-first frameworks for technical teams and low-code platforms for broader adoption.

On the code-first side, LangGraph provides graph-based workflows with state persistence and support for cycles and conditionals. By the end of 2025, an estimated 600 to 800 companies were using it in production. CrewAI takes a role-based approach, assigning agents specific responsibilities. Microsoft’s Agent Framework brings together AutoGen and Semantic Kernel into a more unified offering.

On the low-code side, Langflow offers open-source drag-and-drop tools for multi-agent systems, while Botpress focuses on modular conversational agents.

In production, modular specialization outperforms monolithic intelligence. Micro-specialists consistently deliver better results than a single, general-purpose agent. The orchestrator manages state, dependencies, and handoffs, serving as the system’s nervous system.

For many organizations, a hybrid approach is most practical: quickly prototype in low-code tools, then migrate successful systems to code-first environments for greater control, scalability, and observability.

The governance problem is still wide open

Even when the technology functions effectively, governance often lags behind.

When an AI agent mishandles sensitive customer data, who is accountable? Who reviews the decision trail? Who decides whether the agent should have had that level of autonomy in the first place?

In Deloitte’s 2025 findings, 74% of organizations identified this accountability gap as a major barrier to broader adoption.

Many companies mistakenly believe that improved models will resolve these issues. However, most production failures stem from system design flaws, such as vague objectives, overlapping responsibilities, poor observability, weak feedback loops, or insufficient constraints, rather than model shortcomings.

Security is also a concern. Multi-agent systems introduce risks such as prompt injection in agent-to-agent communication, API key exposure, and unauthorized actions when agents have excessive autonomy.

Leading organizations are beginning to treat agents as digital workers rather than disposable tools. They track agent activities, system interactions, and decisions. This convergence of IT controls and workforce management indicates the direction of future practices.
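Tracking of this kind can start small: a per-agent allow-list of tools plus a log entry for every attempted call, whether it was permitted or not. The sketch below is illustrative only. The agent and tool names are hypothetical, and an in-memory list stands in for what would be durable, tamper-resistant storage in a real deployment.

```python
import json
from datetime import datetime, timezone

# Hypothetical least-privilege table: which tools each agent may call.
ALLOWED_TOOLS = {
    "research_agent": {"search_tickets"},
    "coding_agent": {"open_pull_request"},
}

# In-memory stand-in for a durable audit store.
audit_log: list[str] = []

def call_tool(agent: str, tool: str, target: str) -> bool:
    """Check the allow-list and record the attempt either way."""
    allowed = tool in ALLOWED_TOOLS.get(agent, set())
    audit_log.append(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "tool": tool,
        "target": target,
        "allowed": allowed,
    }))
    return allowed
```

Logging denied attempts as well as permitted ones gives reviewers a decision trail that shows what an agent tried to do, not only what it did.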

What changes by 2027

By 2027, the shift from the single-copilot model will likely be clear. Managing multiple specialized AI agents may become as routine as managing email, calendars, or shared documents.

Organizations that adapt most quickly will not wait for perfect autonomy. Instead, they will begin with clear, repeatable workflows—such as reporting, internal research, onboarding, QA, and support triage—and learn to orchestrate agents safely at a small scale.

In this environment, the most successful employees will not be those who produce the most individual output, but those who design, manage, and improve systems of delegated machine work. They will function as team leads for AI ecosystems, regardless of formal titles.

This creates a new form of leverage. One individual with a well-structured agent system may generate as much output as several people working independently. This shift not only changes productivity but also influences how organizations approach hiring, performance, and definitions of high-performing work.

The next phase of AI in the workplace is not solely about smarter software. It introduces a new management layer, where human value increasingly derives from orchestration, judgment, and system design.

This future is arriving more quickly than many organizations are prepared for.