Artificial Intelligence (AI) has been advancing at a rapid pace. However, one major problem persists: building a robust deployment system that can put cutting-edge AI research into production. This work typically involves complex workflows that span research goals, engineering, and scalability. To understand how DevOps methodologies are changing this field, we spoke with software engineer Supriya Namana.

1. Supriya, how do you see the role of DevOps in AI going forward?

Traditionally, AI and machine learning were treated separately from engineering functions. As in any siloed system, researchers focused purely on innovation without necessarily considering how production teams would integrate their models into existing systems. This created friction: deployment was very hard, or the performance a model promised never materialised in practical use.

DevOps is resolving this issue through automation, standardisation, and collaboration. CI/CD pipelines are no longer only for software engineering; they are also essential to AI processes. They can cover almost everything, from data preprocessing and model training to model testing and deployment. For instance, MAANG companies have already adopted pipelines that treat data sources, models, and code as first-class entities throughout their lifecycle. These pipelines provide consistency across environments, take care of mundane operations, and deliver extensibility. In addition, the feedback mechanisms these systems enable help teams tackle problems such as data drift or performance degradation in a timely manner. This strategy makes models easier to deploy by minimising the steps between research and deployment, which shortens model validation periods. Combining engineering depth with DevOps practices makes AI development faster, more robust, and more effective.

2. What are some of the critical challenges in deploying AI research work?

Deploying AI research work faces several challenges:

Data Drift: Models are trained on fixed datasets, while real-world data is highly volatile, and the mismatch between the two is pernicious. Without the capacity to incorporate new patterns and anomalies found in the actual environment, these models are doomed to fail.

Scalability: Some AI models work well in contained environments but still need adaptation to meet the demands of real-time contexts. This includes ensuring low latency for complex computations on accelerators such as TPUs and GPUs.

Reproducibility: A model developed in research must be replicable in industry with the originally intended output, even when the hardware or dependencies differ. A lack of reproducibility is a real barrier here. It deserves granular attention: not just the code and the configurations behind the model artifacts need to be tracked, but also the dataset.

Cross-Functional Communication: Development slows down when the vocabulary and objectives of researchers do not align with those of production teams. Researchers focus more on model correctness, while deployment teams focus on latency. This gap needs to be closed with clear strategies and frameworks.

DevOps methods respond to these challenges through continuous monitoring, versioning of both datasets and models, and better communication between teams. For instance, tools such as MLflow enable experiment tracking and versioning, and Prometheus in combination with Grafana offers real-time oversight that helps identify and address data drift early. Moreover, Kubernetes can orchestrate scalable deployments in which models adapt to changing workloads. Integrating these practices into the AI lifecycle helps systems move smoothly from research to production and maintain high availability under external strain.
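To make the idea of catching data drift early more concrete, here is a minimal sketch of one common drift score, the Population Stability Index (PSI), computed for a single numeric feature. It is not taken from the interview: the function name, the bin count, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions chosen for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
    epsilon: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature; a larger value means more drift."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant feature

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the reference range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), epsilon) for c in counts]

    ref_shares = bucket_shares(reference)
    live_shares = bucket_shares(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(ref_shares, live_shares))


# Hypothetical data: the live sample is squeezed into the upper half of the range.
training_sample = [i / 100 for i in range(100)]
production_sample = [0.5 + i / 200 for i in range(100)]

DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb cut-off, tune per feature
psi = population_stability_index(training_sample, production_sample)
drift_detected = psi > DRIFT_THRESHOLD
```

In practice a score like this would be computed on a schedule and exported as a metric, so that a monitoring stack can alert on it.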

3. Can you share a case study in which DevOps practices increased AI-related productivity?

Of course. We worked on integrating a complex recommender system into a large-scale international e-commerce platform. While the research team had developed a cutting-edge model with great results in a controlled offline setting, there were many engineering challenges to overcome to support the required functionality in a production environment. The model had to serve real-time inference, needed heavy optimisation, and had to scale.

We used a combination of DevOps techniques to facilitate this process, beginning with automated pipelines for model retraining, validation, and deployment. Tools such as Kubeflow let us ensure that those tasks were performed consistently and repeatably, which lowered the chance of mistakes and shortened the transition from dev to prod. Moving to Kubernetes clusters also allowed elastic scaling of the inference side of the system to absorb variations in traffic to the platform.

Monitoring let us improve the reliability of the system even further. Using tools such as Prometheus and Grafana, we implemented mechanisms for monitoring metrics, finding anomalies in near real time, and guiding resource allocation. This comprehensive monitoring environment enabled us not only to watch latency and resource utilisation but also to proactively identify potential bottlenecks and fix them early.
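As a rough illustration of what near-real-time anomaly detection on latency can look like, the sketch below flags a sample that sits far above a rolling window of recent values. It is not the system described in the case study: the class name, window size, and z-score threshold are hypothetical, and a production setup would more likely express this as an alerting rule in the monitoring stack.

```python
from collections import deque
from statistics import mean, stdev


class LatencyAnomalyDetector:
    """Flags a latency sample that sits far above the recent rolling window."""

    def __init__(self, window: int = 50, z_threshold: float = 3.0, min_samples: int = 10):
        self.samples = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def observe(self, latency_ms: float) -> bool:
        """Record a sample and return True if it looks anomalous."""
        is_anomaly = False
        if len(self.samples) >= self.min_samples:
            mu = mean(self.samples)
            sigma = stdev(self.samples)
            # Guard against a zero spread when recent samples are identical.
            if sigma > 0 and (latency_ms - mu) / sigma > self.z_threshold:
                is_anomaly = True
        # Only learn from normal samples so a spike does not inflate the baseline.
        if not is_anomaly:
            self.samples.append(latency_ms)
        return is_anomaly


detector = LatencyAnomalyDetector()
# Hypothetical warm-up traffic: latency hovering around 100 ms.
warmup_flags = [detector.observe(100.0 + (i % 2) * 2.0) for i in range(20)]
spike_flag = detector.observe(500.0)   # a sudden latency spike
normal_flag = detector.observe(101.0)  # back to normal
```

Only upward deviations are flagged here, on the assumption that unusually fast responses are not a problem worth alerting on.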

The project emphasised the role of DevOps in addressing the mismatch between AI research and AI production. By investing in automation together with tools for constant monitoring, we were able to deploy the recommendation system in a technically demanding, resource-intensive environment. It showed how an evolution-oriented approach embedded in DevOps culture can deliver the speed and user-friendliness that AI systems need so that, however sophisticated they are, they create value for end users.

4. What do you think is the main difference between CI/CD for AI pipelines and CI/CD for regular software pipelines?

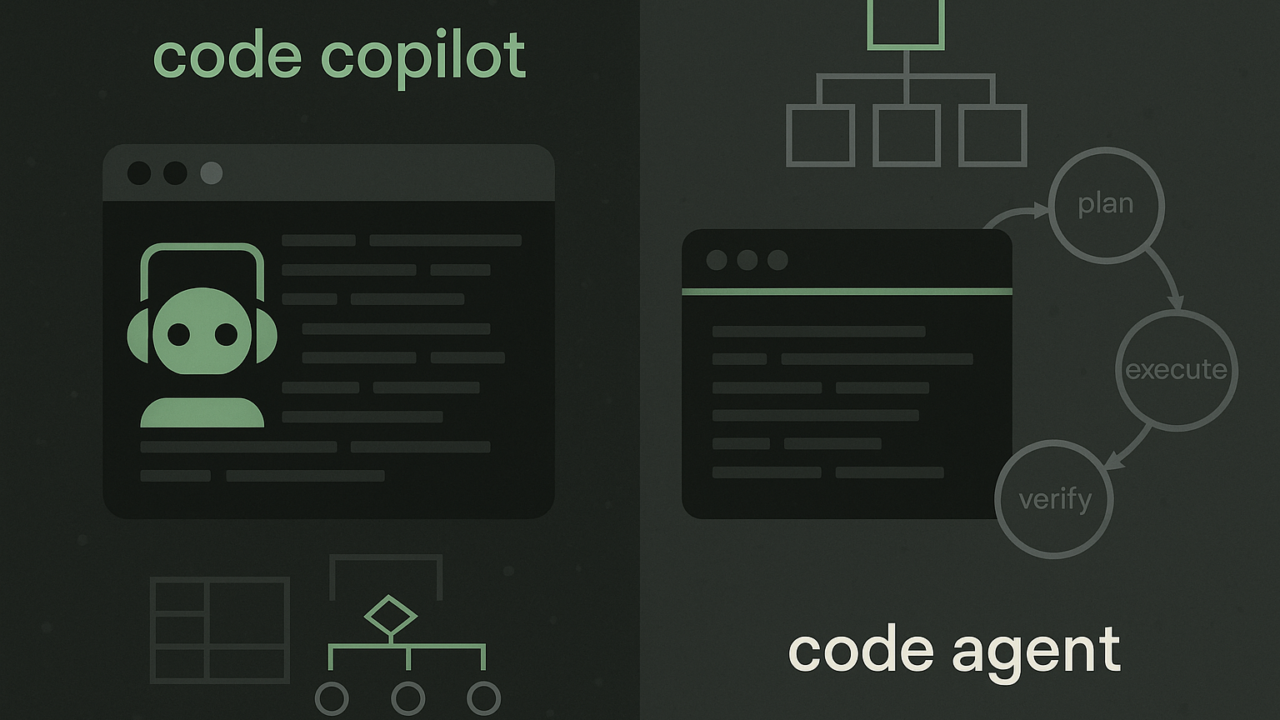

In contrast to traditional CI/CD, which works mostly on code alone, CI/CD for AI requires pipelines to incorporate data and models as key entities. The differences include the following elements:

- Data Validation: Automated data validation, such as checks for data quality and drift, should complement unit and integration tests where relevant.

- Model Testing: Pipelines have to include performance testing to determine whether models meet the required accuracy and latency.

- Versioning: To enable reproducibility, it is important to version the datasets, the models, and the code.

- Compute Requirements: Training and testing often require specialised hardware such as GPUs and TPUs, which makes resource allocation crucial.

We have designed hybrid pipelines that combine Jenkins with TFX to facilitate resource allocation for data, models, and code. These pipelines are adaptable: they cope with evolving datasets and model needs while maintaining reliability and high efficiency.
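The data validation and model testing steps listed above can be pictured as a single promotion gate that a pipeline stage runs before deploying a model. The following is a minimal sketch in plain Python, not the Jenkins and TFX setup mentioned in the answer. All names, the required columns, and the accuracy and latency thresholds are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class GateResult:
    passed: bool
    failures: list = field(default_factory=list)


def validate_rows(rows, required_columns):
    """Data validation: each row needs a non-null value for every required column."""
    failures = []
    for i, row in enumerate(rows):
        missing = [c for c in required_columns if row.get(c) is None]
        if missing:
            failures.append(f"row {i} is missing {missing}")
    return failures


def promotion_gate(rows, required_columns, accuracy, p95_latency_ms,
                   min_accuracy=0.90, max_p95_latency_ms=100.0):
    """Combine data checks and model checks into one pass/fail decision."""
    failures = validate_rows(rows, required_columns)
    if accuracy < min_accuracy:
        failures.append(f"accuracy {accuracy:.3f} is below {min_accuracy:.3f}")
    if p95_latency_ms > max_p95_latency_ms:
        failures.append(
            f"p95 latency {p95_latency_ms:.1f} ms exceeds {max_p95_latency_ms:.1f} ms"
        )
    return GateResult(passed=not failures, failures=failures)


# Hypothetical evaluation batch and metrics for a candidate model.
rows = [{"user_id": 1, "item_id": 7}, {"user_id": 2, "item_id": None}]
result = promotion_gate(rows, ["user_id", "item_id"], accuracy=0.93, p95_latency_ms=140.0)
for failure in result.failures:
    print(failure)
```

A CI job could turn `result.passed` into its exit status, so that a failed data or model check blocks deployment in the same way a failed unit test would.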

5. Which tools and frameworks are significant for incorporating DevOps into AI operations?

Depending on the organisation’s AI strategy, the following tools are necessary to incorporate DevOps into AI:

- Kubernetes: For scaling model inference and training across distributed environments. It can automatically allocate resources to different workloads so that they run efficiently.

- MLflow: For managing the lifecycle of machine learning models, from tracking experiments and tuning hyperparameters to deployment. Its ability to version models and track the datasets behind them supports reproducibility, a key pillar of solid AI systems.

- Apache Airflow: For orchestrating complex workflows such as preprocessing, feature engineering, and model retraining. Its architecture offers a simpler, modular way of managing tasks that depend on one another and of scaling as required.

- Prometheus/Grafana: For tracking the health of systems and the effectiveness of the models in use to ensure they work well in production. These tools help teams identify and resolve issues such as latency spikes or accuracy losses in production in real time.

- GitOps: Keeps infrastructure and configuration versioned and auditable, which lowers the chance of configuration drift and eases error recovery.

Each tool has a different function, and their combined use is the pillar of modern AI DevOps workflows. For instance, Kubernetes scales inference by distributing traffic horizontally across multiple nodes, which is a necessity for any real-time application. MLflow eases the tracking of experiments and deployment pipelines, bringing researchers and engineers into a single operational space. The scheduling and orchestration offered by Apache Airflow automates repetitive tasks, which lets teams devote resources to more productive pursuits.
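The core idea behind workflow orchestration, running tasks in an order that respects their dependencies, can be sketched with Python's standard-library `graphlib`. This is not Airflow's API. The task names and the `run_pipeline` helper are hypothetical and only illustrate the dependency-ordering concept.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "preprocess": set(),
    "feature_engineering": {"preprocess"},
    "train": {"feature_engineering"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}


def execution_order(pipeline):
    """Return task names in an order that respects every dependency."""
    return list(TopologicalSorter(pipeline).static_order())


def run_pipeline(pipeline, tasks):
    """Run each task callable once, in dependency order, and return the order used."""
    order = execution_order(pipeline)
    for name in order:
        tasks[name]()
    return order


# Stand-in task bodies that just record their own name when called.
log = []
tasks = {name: (lambda n=name: log.append(n)) for name in PIPELINE}
run_pipeline(PIPELINE, tasks)
print(log)
```

A real orchestrator adds scheduling, retries, and distributed execution on top of this ordering. A circular dependency makes `TopologicalSorter` raise `graphlib.CycleError`, which is a useful early check on a pipeline definition.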

More recently, Prometheus and Grafana have supported a more proactive approach to operations by providing continuous monitoring and visualisation of defined metrics and of the conditions that most models need to adapt to. GitOps complements these newer methods by giving businesses greater trust in the timeliness, availability, and consistency of their systems.

When these tools are brought together as a single AI DevOps toolchain, teams can build AI systems that are not only extensible and computationally efficient but also robust against changing conditions. Such an approach combines the best parts in one place, keeps iteration cycles efficient, reduces the time required to release new products, and encourages inventiveness.

6. What best practices would you recommend for teams looking to improve AI deployment?

Teams should adopt certain guidelines to improve AI deployment, such as:

Interdisciplinary Approach: Encourage participation from researchers, engineers, and DevOps practitioners to eliminate silos. Collaboration results in a better understanding of shared objectives and promotes knowledge sharing, which reduces miscommunication and inefficiency. Setting up multidisciplinary teams with clear responsibilities helps ensure a smooth transition from research to production.

Everything That Can Be Automated Should Be: Automation is at the heart of effective AI deployment. Data preprocessing, model training, testing, and deployment can all be automated, which reduces the likelihood of human error, saves time, and supports repeatability. Each step of the AI lifecycle can be made more efficient with ML tools such as Apache Airflow and MLflow, which aid reproducibility and traceability.

Always Monitor Performance: AI systems in production require regular, ongoing maintenance. Tools such as Prometheus and Grafana can be used to track essential parameters such as model drift, latency, or resource consumption. Continuous monitoring should also include alerting that is configured to avoid alert fatigue yet still respond to important situations.

Go Slow: Validate models and workflows at small scale first, and move to production level only after thorough testing at that scale. This helps identify and fix pain points early, reducing the risk when moving to a larger scale. Real deployments are also a good source of information on how well the model or system works.

Feedback Loops: Design mechanisms to collect and analyse feedback from production environments. Feedback such as user behaviour or the outcomes of user decisions is data that can drive further improvements to the model or its workflow. This information is fed back into research to improve the process.

Mitigate Pitfalls: Avoid overlooking problems such as handling edge cases during data cleaning or ensuring the system can sustain itself during high-load periods. Rather than bombarding the team with monitoring alerts, focus on real-time insights, and use anomaly detection systems to address the major causes of issues.
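One simple way to reduce alert fatigue while still responding to important situations is to suppress repeats of the same alert during a cooldown period and let critical alerts bypass it. The sketch below illustrates that idea only. The class name, the alert keys, and the five-minute cooldown are hypothetical, and real alerting tools offer their own grouping and silencing features.

```python
class AlertThrottle:
    """Suppress repeated alerts for the same key within a cooldown window."""

    def __init__(self, cooldown_seconds: float = 300.0):
        self.cooldown_seconds = cooldown_seconds
        self._last_sent = {}  # alert key -> timestamp of the last alert sent

    def should_send(self, alert_key: str, now: float, critical: bool = False) -> bool:
        """Return True if this alert should go out at time `now` (in seconds)."""
        last = self._last_sent.get(alert_key)
        # Critical alerts always go out; others wait until the cooldown has elapsed.
        if critical or last is None or now - last >= self.cooldown_seconds:
            self._last_sent[alert_key] = now
            return True
        return False


throttle = AlertThrottle(cooldown_seconds=300.0)
decisions = [
    throttle.should_send("latency_spike", now=0.0),                   # first alert
    throttle.should_send("latency_spike", now=60.0),                  # repeat, suppressed
    throttle.should_send("latency_spike", now=60.0, critical=True),   # critical, bypasses
    throttle.should_send("accuracy_drop", now=61.0),                  # different key
    throttle.should_send("latency_spike", now=400.0),                 # cooldown elapsed
]
```

Passing the timestamp in explicitly, rather than reading the clock inside the class, keeps the behaviour easy to test.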

With these practices in place, companies can create a healthy environment for AI solutions in which silos are eliminated, automation is used, and continuous improvement is implemented. In addition to reducing friction, these strategies focus on improving the scalability and reliability of services. The combination of research and DevOps will be crucial as AI technology becomes more developed and more widely available. With this set of practices, organisations can manage the AI development lifecycle and deliver the solutions that are needed.