Artificial intelligence is often promoted as a way to do things faster—writing, sorting, diagnosing, searching, optimizing, and even making decisions. But the real ethical debate is not about whether machines can do more, but whether they should. This question comes up well before we reach science fiction scenarios.

It appears as soon as AI affects a hiring decision, a student’s grade, a patient’s treatment, a loan approval, a police investigation, a child’s learning, or even someone’s mood online. At that point, “ethical AI” is no longer just a slogan; it becomes a real test of restraint.

The phrase itself can sound reassuring, maybe even too much so. It implies that with enough guardrails, fairness checks, documentation, and oversight, AI can be made safe from the start. There is some truth to this, but it is only part of the story.

UNESCO’s Recommendation on the Ethics of Artificial Intelligence puts the protection of human rights and dignity at the heart of AI governance and highlights principles like transparency, fairness, and human oversight.

NIST’s AI Risk Management Framework makes a similar point in more practical terms, saying that understanding and managing AI risks is key to building trust.

These two views are important because they show that ethics is not just about public image, but about setting limits on power.

The real meaning of “Ethical AI”

Ethical AI is not just about avoiding obvious harm. It means knowing what should not be automated, what should not be inferred, and what should not be optimized without consent, options for appeal, or human control. UNESCO’s Recommendation is helpful here because it does not treat ethics as a purely technical issue.

It connects AI to human rights, dignity, fairness, environmental impact, and the risk of making existing inequalities worse.

NIST’s framework also treats AI risk as more than a matter of cybersecurity or accuracy. It asks organizations to consider context, trade-offs, and both anticipated and unexpected impacts, positive as well as negative.

This mix is important. A system can be efficient, profitable, and technically impressive, but still be ethically wrong for its use.

This is why the debate is not as simple as “good AI” versus “bad AI.” Many tough cases involve systems that work well in some ways but have serious moral costs in others. For example, an AI model might spot likely fraud faster than people, but it could also wrongly flag innocent people if the data is biased.

A generative system might help doctors summarize notes, but it could also make up medical details if used carelessly.

NIST’s generative AI profile warns that organizations may need different levels of oversight, along with additional human review, tracking, and documentation, depending on the risks involved. In other words, the degree of scrutiny should scale with the consequences of mistakes, not simply with how new the tool is.

The first red line: when AI becomes a substitute for moral judgment

A clear sign that AI has gone too far is when it stops helping people make decisions and starts replacing them in areas where human values are essential. Hiring, healthcare, education, policing, welfare screening, and criminal justice are about more than just patterns. They require dignity, context, explanations, and the chance to challenge decisions.

UNESCO’s framework repeatedly stresses the importance of human oversight, including how much of it there is and what happens when people override the system.

This is not an abstract idea. It is a way to protect the space where human responsibility is still needed.

The main question is not whether AI works well most of the time.

It is whether the cost of a mistake is something society should let a machine handle. If a recommendation algorithm picks the wrong song, the harm is minor. But if a sentencing tool, admissions screen, or diagnostic system quietly leads someone to a harmful decision, the harm is much more serious.

In these cases, the issue is not just accuracy—it is about handing over moral responsibility. When institutions start hiding behind automated results, accountability starts to fade.

A recent open-access review of AI bias explains this, saying that AI-related biases can cause injustice, harm, loss of autonomy, and erosion of accountability. That last phrase may be one of the most important in the ethics debate.

Bias is not a bug that can always be patched away

In technology, there is often a tendency to treat ethics like a technical task list. If a system is biased, people try to clean the data, adjust the model, add a fairness check, and move on.

This work is important, but it does not fix everything. A well-known 2023 survey on fairness and bias in AI describes the problem as complex and multifaceted, involving not only technical issues but also the social conditions in which data is produced and used.

The survey points out that the sources of bias, their effects, and the strategies for mitigating them all interact, which makes fairness a moving target rather than a final goal.

This is important because many harmful systems are not biased just because the data is flawed. They are biased because the world that created the data is unequal, exclusive, or unfair.

If old hiring records favored certain candidates, the AI can learn that bias. If medical access was unequal, prediction systems can pick up that inequality. If law enforcement data shows over-policing, AI can repeat those patterns while seeming objective.

So when should we say enough?

One answer is when a system’s supposed neutrality is used to justify an unfair reality instead of questioning it. If AI is used in areas with known structural inequalities, and its results are accepted as final without real ways to challenge or review them, the ethical problem is bigger than the model itself.

At that point, the system is not just helping institutions—it is passing their biases through code.

Transparency is necessary, but it is not enough

There are many calls for transparent AI, and for good reason. If people are affected by automated decisions, they should know these decisions are happening and have a way to challenge them.

UNESCO’s ethical framework puts transparency at the center and stresses that AI governance should support human rights and dignity. But transparency alone can be misleading if it becomes a box to check rather than a real mechanism for accountability.

A company can share its principles and still create manipulative systems. A model card can describe a tool but still not make it easy for regular users to challenge it. A dashboard can show confidence scores but still hide the real social impact of mistakes.

Transparency is powerful when it gives people explanations, options for appeal, and influence. It is weak when it only makes institutions look responsible.

NIST’s AI RMF is more useful here because it ties trust to governance through its core functions: govern, map, measure, and manage AI risks in context.

This framework suggests something important: explanations only matter if they lead to real changes in how an organization acts.

So “enough” starts when transparency is just for show. If a system is too complex, too unclear, or changes too quickly to be explained to the people it affects, then it should not be used in high-stakes situations.

In some areas, a partial explanation is not enough. If someone loses a benefit, a job, parole, or access to medical treatment, saying “the model flagged you” is not a real answer.

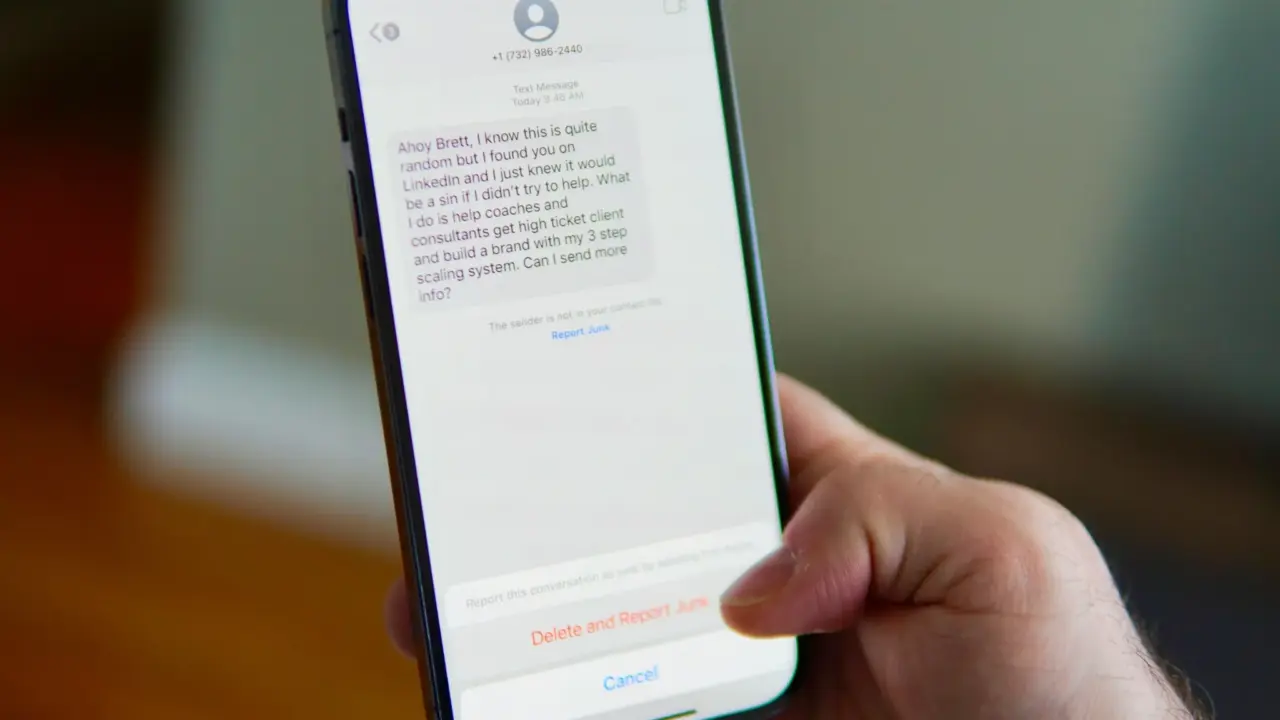

The second red line: when AI manipulates rather than assists

AI can also cross a line by shaping human behavior in ways that are hard to see. Recommendation systems, generative assistants, adaptive interfaces, and algorithmic feeds can all guide attention and decisions without seeming forceful.

This influence becomes a serious ethical issue when systems are designed to take advantage of emotional vulnerability, encourage compulsive use, or persuade people unfairly.

UNESCO warns that AI can “embed biases,” “threaten human rights,” and increase inequalities. These concerns are not just for public-sector uses—they also apply to commercial systems that quietly guide users on a large scale.

This is one reason why ethical AI is about more than just safety tests. A model can be technically safe—it does not crash, leak data, or produce illegal content—but still harm people in how it interacts with them.

If a system is designed to maximize attention by exploiting uncertainty, outrage, loneliness, or dependence, then the ethical problem lies in what it is built to pursue, not only in how it is built. NIST’s generative AI profile says organizations may need different mixes of human and AI involvement depending on risk.

This should include cases where the risk is not a big failure, but slow, ongoing manipulation.

So when should we say enough in consumer AI? When personalization turns into controlling behavior without real consent. When users are nudged, profiled, or emotionally influenced in ways they cannot easily notice or refuse.

When the machine stops helping people do what they want and instead learns how to keep them doing what benefits the platform.

The third red line: when AI displaces responsibility

One of the most dangerous myths in AI governance is that oversight just means putting a human in the process. In reality, this kind of oversight can be very weak.

If the person in charge has no time, no authority, no technical knowledge, or is pressured to follow the system’s output, then “human oversight” is just a formality, not real protection.

NIST’s playbook goes further by urging organizations to record how much oversight there is and keep track of overrides. This is valuable because it treats oversight as something you can measure, not just claim.

The bigger issue is responsibility. AI use crosses an ethical line when it lets an institution avoid taking responsibility for what happens. Saying “the AI recommended it” is not a real excuse if a hospital, school, bank, employer, or public agency made the final decision.

Accountability cannot be handed off to a model any more than to a spreadsheet. The more important the system, the more we need to know who can stop it, who can override it, and who is responsible if it causes harm.

UNESCO’s framework and NIST’s RMF both support this idea, even if they use different words. The main point is that AI must stay under the control of people who can be held accountable.

Ethical AI also has environmental and cultural limits

Too often, discussions about AI ethics focus only on fairness and transparency and ignore other limits. UNESCO explicitly includes environmental concerns, warning that AI can contribute to climate degradation.

This is an important reminder because large AI systems are not just virtual—they use energy, water, hardware, and computing resources. Ethical use is not just about what a model does or decides. It is also about whether the social value of the application is worth its material cost.

There are also cultural limits. UNESCO’s later work on AI and culture raises concern that AI systems can change creative practices, language, and how knowledge is shared. If a tool homogenizes expression, silences minority voices, or favors dominant data and languages, the harm may not look like a typical safety problem.

It may look like slow erasure. Ethical AI should protect difference, context, and forms of human meaning that cannot be optimized. Sometimes, saying enough means refusing to automate judgment, expression, or cultural interpretation that loses its value when turned into a prediction.

So where should society draw the line?

A good rule is that the more a system affects rights, dignity, health, livelihood, or democracy, the less society should accept secrecy, weak options for appeal, or poor oversight.

Low-risk uses can allow more testing. High-stakes uses should have stricter limits, required audits, explainability rules, and sometimes even bans.

NIST’s frameworks are helpful because they see AI risk as depending on context, not as the same everywhere.

UNESCO is helpful because it insists that human dignity and rights are always the main concern.

Together, they show that ethical AI is not about seeing how far machines can go, but about deciding where to draw the line.

This means society should be ready to say enough in at least four cases: when AI replaces human judgment in sensitive areas without real options for appeal; when it repeats structural injustice while pretending to be neutral; when it manipulates people more than it helps them; and when institutions use it to avoid responsibility for harm. These are not rare situations—they are becoming more common.

The point of ethics is not to make AI infinite

The biggest misunderstanding in this debate is thinking that ethics is meant to make AI acceptable everywhere. It is not. Ethics is also about deciding where AI should stop. A society that takes ethics seriously will not just ask how to make systems safer.

It will ask which decisions must stay human, which risks are too great even if they are profitable, and which efficiencies are not worth the moral cost. UNESCO’s rights-based approach and NIST’s risk-based approach both offer helpful tools, but tools are not the same as judgment. That last step is up to us.

So the real test of ethical AI is not about how far we can extend its use. It is about whether we can still see a boundary when we reach it.

The important question is no longer “Can AI do this?” but “Should we allow it?”

Sometimes, the most ethical answer a society can give is the simplest: no, not here, not this way, not any further.