Artificial intelligence doesn’t fail because models are weak. It fails because systems never make it to production.

That’s the reality for Andrei Shcherbinin, who works at the intersection of research, engineering, and product—where machine learning is judged not by benchmarks, but by impact.

In this TechGrid interview, Shcherbinin breaks down what separates real-world ML from experimentation, why many AI projects never reach users, and why execution—not models—is now the real competitive advantage.

How would you describe your current role and scope as an ML leader, and what kinds of systems are you responsible for today?

Andrei Shcherbinin:

I’m currently the Team Lead of an ML team at a product company focused on dating platforms. My role goes beyond building models: I oversee the full lifecycle of ML solutions, from identifying business use cases, working with stakeholders, and choosing the right approach, through defining the architecture and ensuring continuous development, to launching into production, monitoring performance, and improving systems after release.

My scope is fairly broad. It includes recommender systems, content automoderation, retention and marketing ML, monetization and traffic attribution models, as well as parts of MLOps and the infrastructure around ML. In practice, I’m responsible both for making sure the team delivers new ML features and for ensuring that the systems already in production remain stable, measurable, and genuinely valuable for the business.

A large part of my work also sits at the intersection of multiple functions. I need to align research, backend, analytics, product, and platform teams so that ML does not remain just a model in a notebook, but becomes something that truly works inside the product and delivers visible business impact.

What distinguishes a production-ready ML system from a model that only performs well in demos or offline experiments?

Andrei Shcherbinin:

The key difference is that a production-ready system solves a real problem in a live product, rather than simply showing strong offline metrics on a test dataset.

Offline, it is possible to achieve attractive numbers such as ROC AUC, accuracy, or precision. But once a model enters production, a different set of questions immediately appears: does it perform reliably under load, does it meet latency requirements, how good is the input data, is there proper monitoring in place, how does the system behave in edge cases, can it be updated safely, do we understand how it interacts with existing business rules and upstream filters, and most importantly, does it create measurable business impact?

For me, a production-ready ML system has a clear business objective, a stable data pipeline, quality control, model and infrastructure monitoring, a clear fallback strategy, A/B validation on real users, and an ongoing support process after launch. Without those elements, it is more of a strong prototype or MVP than a true production system.

Why do so many AI and ML projects fail before they reach real users, and what have you learned from addressing those challenges in practice?

Andrei Shcherbinin:

A common reason is that teams start with the model rather than the problem. They can spend a long time improving quality on historical data, only to realize later that the solution does not fit well into the product, is too expensive, too slow, or too difficult to measure properly.

Another major reason is underestimating production complexity. Many things look simple before launch: data quality, integrations, logging, online inference, monitoring, A/B setup, coordination with adjacent teams. In practice, those often require more effort than the model itself.

From experience, I’ve learned a few key lessons. First, the team needs to agree very early on what business outcome defines success. Second, you need to design not only the model, but the entire system around it. Third, experiment design, measurable success metrics, and post-launch analysis need to be treated with the same rigor as service development. Fourth, it is usually better to launch a simpler but reliable solution than to spend too long building the perfect model that never reaches production. And finally, without observability and clear metrics, even a good ML system quickly turns into a black box that nobody trusts.

Can you share an example of an ML system or AI feature you helped take from idea to production, and what impact it created?

Andrei Shcherbinin:

One strong example is the development of recommender systems in a dating product. We were not simply improving ranking as a research task — we were building a production system under clear business constraints: business rules, latency, stability, coverage, A/B evaluation, and downstream business impact.

In one recommender system improvement, we achieved a notable business effect in an A/B test: around +12% in ARPPU and approximately +10% in ARPU. It was a strong example of how an ML feature can affect not only engagement, but also revenue.
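For readers unfamiliar with the two metrics: ARPU divides revenue by all users in a group, while ARPPU divides it by paying users only. Below is a minimal sketch of how both, and the relative lift between A/B groups, could be computed. The per-user revenue lists are made-up illustration data, not figures from the interview, and the function names are hypothetical.

```python
def arpu(revenues):
    """Average revenue per user: total revenue over ALL users in the group."""
    return sum(revenues) / len(revenues)


def arppu(revenues):
    """Average revenue per paying user: total revenue over users who paid."""
    paying = [r for r in revenues if r > 0]
    return sum(paying) / len(paying) if paying else 0.0


def relative_lift(control_value, treatment_value):
    """Relative change of the treatment metric versus control."""
    return (treatment_value - control_value) / control_value


# Made-up per-user revenue, for illustration only (0 = non-paying user).
control = [0, 0, 10, 0, 20, 0, 0, 10]
treatment = [0, 0, 12, 0, 22, 0, 0, 10]

print(f"ARPU lift:  {relative_lift(arpu(control), arpu(treatment)):.1%}")
print(f"ARPPU lift: {relative_lift(arppu(control), arppu(treatment)):.1%}")
```

A real analysis would also check that the lift is statistically significant before reporting it.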

Another example is automoderation. In that area, success depends not only on having a strong model, but on the full chain: rules, an ML classifier, an LLM or judge layer, a decision layer, human review, and a feedback loop. In these kinds of tasks, we focused heavily on the production side — how to make the system not only accurate, but also manageable, interpretable, and suitable for a real moderation workflow.
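The chain described here can be pictured as a series of layers, each of which either decides or passes the item on. The following is a minimal sketch under stated assumptions, not Shcherbinin's actual system: the blocklist, the thresholds, and the `classifier` and `judge` callables are all hypothetical stand-ins.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Decision:
    action: str  # "approve", "reject", or "human_review"
    source: str  # which layer made the call (useful for interpretability)


BLOCKLIST = {"spamlink.example"}            # hypothetical hard rule
feedback_log: List[Tuple[str, str]] = []    # (text, human label) pairs


def rule_layer(text: str) -> Optional[Decision]:
    """Cheap deterministic rules run first."""
    if any(term in text.lower() for term in BLOCKLIST):
        return Decision("reject", "rules")
    return None


def moderate(
    text: str,
    classifier: Callable[[str], float],  # assumed: returns P(violation)
    judge: Callable[[str], str],         # assumed: "approve"/"reject"/"unsure"
    low: float = 0.2,
    high: float = 0.9,
) -> Decision:
    ruled = rule_layer(text)
    if ruled is not None:
        return ruled

    score = classifier(text)
    if score >= high:
        return Decision("reject", "classifier")
    if score <= low:
        return Decision("approve", "classifier")

    # Ambiguous scores are escalated to the (slower) LLM or judge layer.
    verdict = judge(text)
    if verdict in ("approve", "reject"):
        return Decision(verdict, "judge")

    # Decision layer: anything still unresolved goes to a human.
    return Decision("human_review", "decision_layer")


def record_review(text: str, human_label: str) -> None:
    """Feedback loop: store human labels for later retraining."""
    feedback_log.append((text, human_label))
```

Recording which layer produced each decision is one way to keep such a system manageable and interpretable for moderators.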

How do you balance model quality, latency, infrastructure cost, and user experience when building AI-powered products?

Andrei Shcherbinin:

I see this as an optimization problem rather than an attempt to maximize a single metric. In a real product, you cannot endlessly improve model quality if that comes at the expense of user experience or significantly higher costs.

I usually start from the product context: how latency-sensitive the use case is, what minimum level of accuracy already creates business value, how much the business is willing to pay for inference, and what the effect of model errors is on the user.

For example, in real-time recommender systems, latency is already part of product quality. If a model is slightly better offline but slow enough to damage the user experience, that is a poor trade-off. That is why we evaluate the system as a whole: model quality, RPS, latency, success rate, coverage, infrastructure cost, and downstream business metrics.

In practice, the balance usually comes from keeping the architecture reasonably simple, using fallback strategies, applying different model tiers for different scenarios, maintaining strict monitoring, and validating real impact through A/B testing rather than relying only on offline metrics.
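One common form of fallback strategy is a latency budget: if the main model does not answer in time, or fails, a cheaper result is served instead. The sketch below assumes hypothetical `model_fn` and `fallback_fn` callables and an arbitrary 100 ms budget; it illustrates the idea rather than any specific production setup.

```python
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

# Shared worker pool for model calls (pool size is an arbitrary assumption).
_executor = ThreadPoolExecutor(max_workers=4)


def recommend_with_fallback(user_id, model_fn, fallback_fn, budget_s=0.1):
    """Return (recommendations, source), where source records who answered."""
    future = _executor.submit(model_fn, user_id)
    try:
        return future.result(timeout=budget_s), "model"
    except FutureTimeout:
        # Budget exceeded: serve the cheap result. cancel() only helps if the
        # call has not started yet; a running call finishes in the background.
        future.cancel()
        return fallback_fn(user_id), "fallback_timeout"
    except Exception:
        # The model call itself failed: degrade gracefully instead of erroring.
        return fallback_fn(user_id), "fallback_error"
```

Logging the returned source gives a direct measure of how often the fallback path is used, which ties into the success-rate and coverage metrics mentioned above.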

What does effective leadership look like in an ML team today?

Andrei Shcherbinin:

To me, effective leadership in an ML team means being able to connect several worlds at once: research, engineering, data, product, and business. It is not enough to understand models. You need to make decisions under constraints and ensure that the team delivers outcomes, not just good ideas.

A strong ML leader today helps the team stay focused on what truly matters, knows how to translate business problems into technical formulations, prevents research from drifting away from production reality, and at the same time does not sacrifice engineering quality for speed.

Transparency is also essential. The team needs to understand why we are building a given feature, what success looks like, what the risks are, and why we are choosing a particular trade-off. An important part of leadership is discussing decisions openly so the team understands their impact.

Just as important is ownership: responsibility covers everything that happens before and after launch.

How do you evaluate whether an ML feature is truly valuable in production?

Andrei Shcherbinin:

I always separate offline quality from production value. Offline metrics matter, but they are not the same as product value.

To understand whether a feature is truly useful in production, I look at several levels: system reliability, model performance over time, real user metrics like CTR and retention, and business impact such as revenue or cost reduction.

If an offline metric improves but nothing changes in an A/B test — or worse, user experience declines — then there is no real value. The key criterion is always system behavior on live traffic.
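Whether "nothing changes" in an A/B test is itself a statistical question. As a minimal sketch, a two-proportion z-test can check whether a difference in a rate metric such as CTR is distinguishable from noise. The function name and the example counts are illustrative assumptions, and real experimentation platforms typically do considerably more (sequential testing, variance reduction, guardrail metrics).

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    conv_*: number of conversions (e.g. clicks); n_*: number of users.
    Returns (z, p_value).
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts for illustration: control vs. treatment clicks and users.
z, p = two_proportion_z_test(conv_a=1000, n_a=10000, conv_b=1080, n_b=10000)
print(f"z = {z:.2f}, p = {p:.3f}")
```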

What areas of applied machine learning do you consider your strongest expertise today?

Andrei Shcherbinin:

My strongest area is applied ML at the intersection of models, product, and production. Not just training a model, but turning a solution into a working system with measurable impact.

That includes recommender systems, retention and marketing ML, content moderation, and production ML systems with MLOps.

What practical lessons have influenced your approach the most?

Andrei Shcherbinin:

A simple solution that actually reaches production is almost always better than a more sophisticated one that never gets beyond research.

Data quality and integration often matter more than small model improvements.

Without monitoring, a system is not complete.

And systems must be designed for failure. Production ML is about resilience, not just accuracy.

What do experienced ML practitioners still underestimate today?

Andrei Shcherbinin:

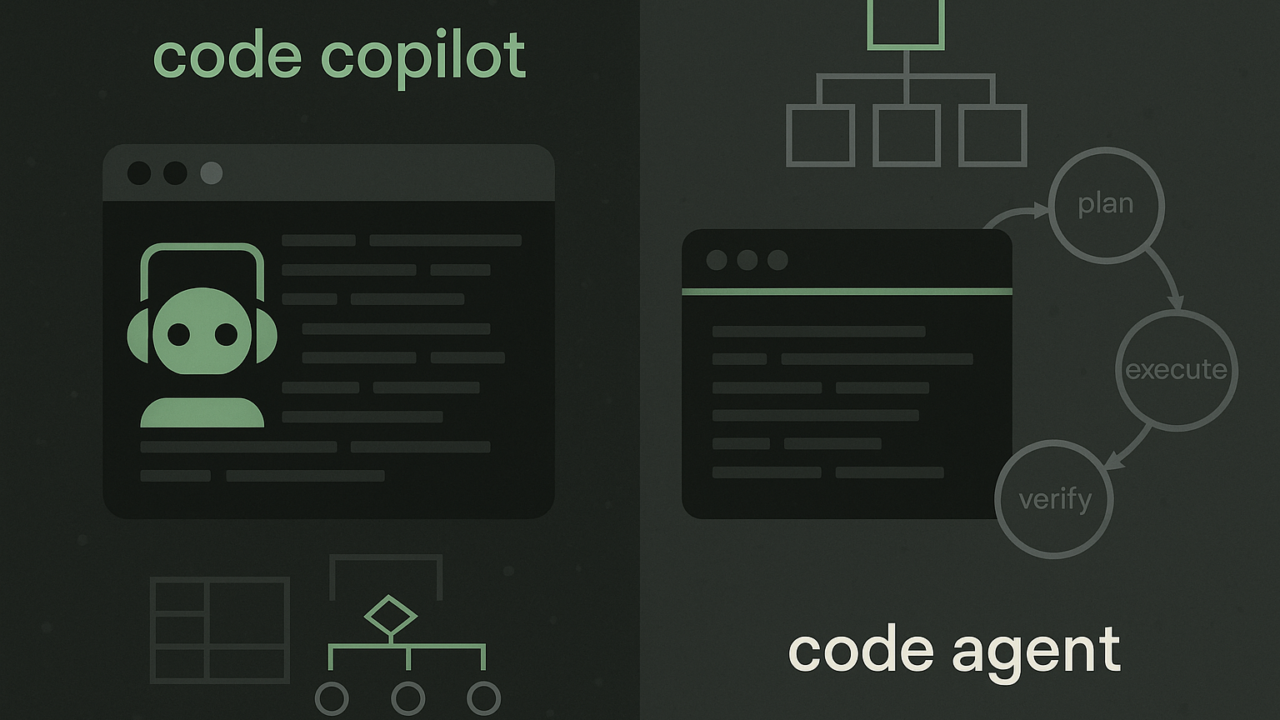

Many teams still fail to appreciate that ML by itself is rarely an advantage. The advantage comes from how quickly you can turn models into working product solutions.

Experienced practitioners need to understand not only models, but also how decisions are made around them: use cases, economic impact, and how ML affects user behavior.

Another underestimated factor is focus. Strong teams are not the ones trying everything — they are the ones that know where ML actually creates value.

And as models become more accessible, competitive advantage is shifting toward execution quality: data, infrastructure, experimentation speed, product quality, and team maturity.

What This Conversation Shows About Modern Machine Learning

Machine learning today is less about building better models and more about making them work in real systems.

Success no longer depends on algorithms alone, but on execution—data quality, infrastructure, monitoring, and the ability to deliver measurable business impact.

As models become more accessible, the real advantage shifts to teams that can ship faster, integrate better, and prove value in production.

In short, ML is no longer just a research problem. It’s an execution problem.