James Markunas has a simple test for whether an AI program is actually working: are releases getting cleaner, are customers getting value faster, and are executives making decisions sooner? If the answer is no, he says, you don’t have an AI success story. You have a more expensive version of the same dysfunction.

Markunas has spent fifteen years inside the kind of enterprise programs most leaders prefer to describe from a safe distance: broken delivery at DIRECTV, platform modernization at BCG, and commerce migrations across five countries at Publicis Sapient. He’s watched organizations spend heavily on AI and come out the other side with better-looking dashboards and worse decision-making. He’s also seen it done right.

We asked him about the gap between the two, and he didn’t hold back.

1. You’ve argued that AI often amplifies whatever management quality is already present inside an organization. A lot of companies say AI is improving productivity, but what are they often mistaking for progress?

James Markunas:

When companies say, “AI is improving productivity,” I always ask, “Productivity toward what?”

A lot of enterprises, especially ones fresh off a digital transformation, see more documents, more dashboards, more tickets, more meeting summaries, and more automated follow-ups, and they call that productivity. In those cases, AI is just helping them produce more clutter, not better outcomes.

AI is extremely useful. It can compress research, speed up analysis, surface risks, draft requirements, summarize decisions, and help teams move faster with more context. But if your organization already has unclear ownership, weak prioritization, bloated approval paths, or no real operational discipline, AI won’t fix that. It amplifies it.

I’ve seen these issues across digital transformation, commerce, regulated industries, and large platform programs. The amount of content is rarely the problem. The problem is decision quality. Is your organization really more productive if nobody knows who owns decisions, how those decisions affect the P&L, or which tradeoffs the business is actually making?

The real test is outcomes. Are releases getting cleaner? Are customers getting value quicker? Are compliance cycles shorter? Are handoffs clearer? Are executives making decisions sooner? Are teams spending less time reconciling conflicting information?

If the answer is no, AI hasn’t improved productivity. It’s increased output volume.

2. Drawing on the patterns you’ve observed in AI-enabled teams, why do poorly managed companies tend to become even more chaotic when AI is introduced at speed?

James Markunas:

AI rewards operational discipline and punishes companies that try to use it as a substitute for that discipline.

Chaotic companies get more chaotic with AI because automation doesn’t create discipline. It multiplies whatever’s already there. AI becomes rocket fuel for the existing mess: meeting notes with no continuity, hundreds of tickets that don’t tie to a real initiative, AI-generated requirements nobody challenged or read, and constant firefighting while nobody moves the revenue needle forward.

Before AI, bad requirements had to survive a human conversation.

If a product leader wanted something risky, like replacing checkout with an embedded iFrame, someone had to sit down with the solution architect and technical lead and argue through it. I saw versions of that problem on Boehringer Ingelheim and Modere. The argument was the point. It forced architecture, security, customer experience, and product into the same room before a bad idea became production code.

In both cases, we launched real checkout solutions built with sustainable and scalable code because the team worked through the problem instead of blindly accepting the shortcut.

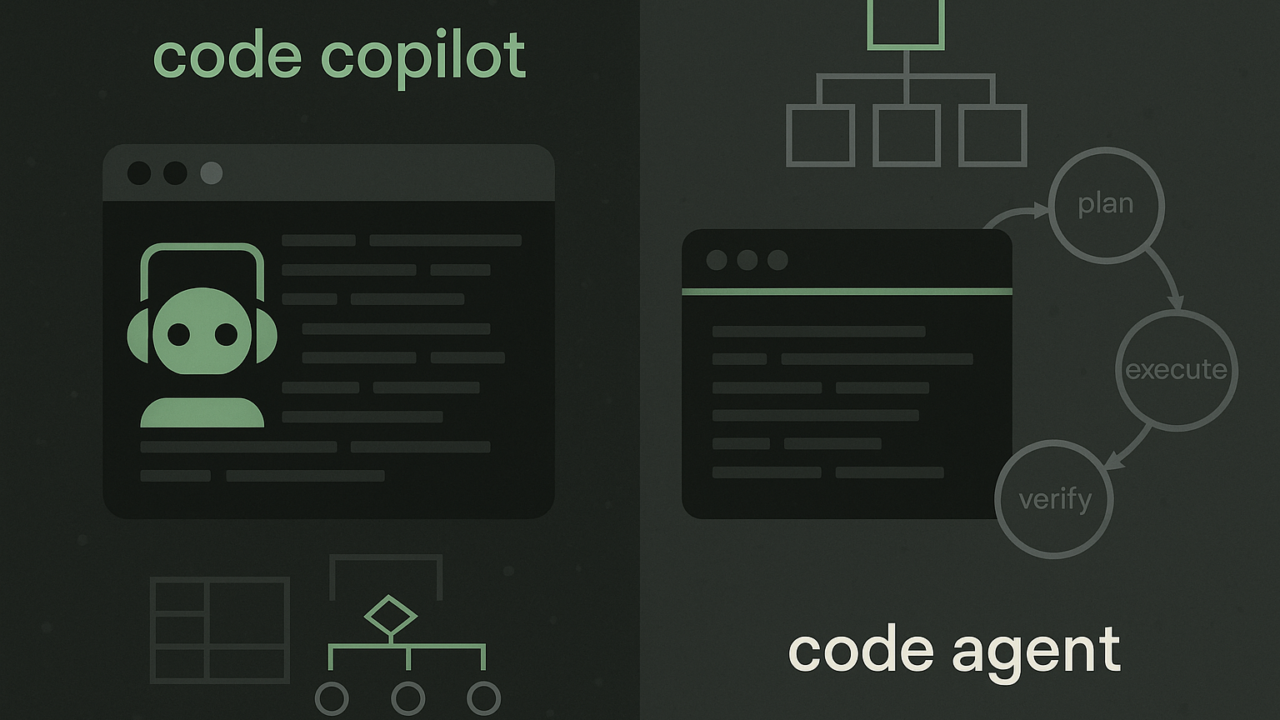

AI can let bad requirements skip the argument that should have killed them. Picture an engineer telling Claude Code, “Replace checkout with an iFrame,” because someone captured it in a meeting note and nobody pushed back. Goodbye, eCommerce business.

I love AI when it works. Take American Apparel as the opposite example. Our scrappy, tight-knit engineering team would’ve benefited from AI because we had operating discipline. We had tight intake, tight scoping, useful documentation, and a development process that had to be sharp because we were working inside a brittle, spaghetti-like Oracle commerce system.

In that kind of environment, AI would’ve had something useful to accelerate. It could have analyzed inventory logic, sped up scoping, surfaced code dependencies, and maybe helped unwind parts of the spaghetti code engine formerly known as Oracle ATG. But it would have worked because the humans knew what to look for and how to articulate the solution.

It is like that old saying: “Wherever you go, there you are.”

Now it’s: “Wherever you go, AI pours gasoline on it.”

3. From your perspective, what happens when executives use AI to demand faster execution without fixing unclear priorities, bad incentives, or decision bottlenecks?

James Markunas:

When executives use AI to demand faster execution without fixing processes, they don’t create speed. They create more efficient delusions.

AI makes work seem cheaper, so executives start acting as if the organization has infinite capacity. But ROI doesn’t come from generating more dashboards, automations, decks, or tickets. ROI comes from work that increases revenue, protects margin, lowers operating cost, improves customer experience, or removes real business risk.

UPS got it right with ORION because they pointed the machine at real economic wounds: miles driven, fuel burned, maintenance, emissions, and cost. Not “better collaboration.” Not “more efficient ideation.” Miles. Fuel. Maintenance. Emissions. Cost. Full ORION deployment was expected to cut 100 million miles a year, save 10 million gallons of fuel, reduce CO₂ emissions by 100,000 metric tons, and deliver more than $300 million in annual savings. That is not innovation theater. That is a machine gun pointed directly at the P&L.

I led the launch of DIRECTV’s digital revenue platform to put offers, premiums, and add-ons in front of customers through the set-top box. The product launched. It worked. But it also exposed the bigger problem: the business didn’t just need more campaign execution. It needed smarter offer decisioning.

DIRECTV had the raw ingredients: customer behavior, billing history, package data, churn signals, promotions, eligibility rules, and the kind of Salesforce, Snowflake, and Tableau stack that should have made the revenue engine smarter. But the operating structure was full of silos, dependencies, approvals, and manual coordination; every move depended on six other teams, several approvals, and a prayer.

AI would have changed the math if it were pointed at the right problem. Instead of dragging campaigns through a bureaucratic maze, it could’ve used real-time data to spot churn risk, choose the offer most likely to protect revenue, and surface the highest-value upsell at the right moment.

The real win was never launch theater. It was using context and automation to put the right offer in front of the right customer fast enough to reduce churn and grow revenue at the same time.

Without clarity, ownership, and measurable goals, AI becomes a force multiplier for dysfunction.

4. You’ve spoken about how polished AI-generated outputs can hide weak thinking. Why can AI make a bad strategy look more convincing than it really is?

James Markunas:

AI always sounds confident, even when it’s wrong and even when it’s lying to you. That’s dangerous because confidence and judgment aren’t the same thing.

A weak strategy used to jump out immediately: it had gaps, contradictions, missing assumptions, and vague language. Now, AI can take that same weak thinking and wrap it in a polished structure: executive summary, strategic pillars, implementation roadmap, RAID log, KPI framework, risk register, and next steps.

That polish creates false confidence.

To illustrate, I used one serious example and one ridiculous example. In both cases, ChatGPT was happy to give me several pages of confident-sounding content.

Example 1: Let’s plan a major enterprise digital transformation.

Example 2: Let’s achieve what only one man in the last 2,000 years has achieved.

In the project-planning example, the RAID log looked great at first glance. Could I hand it to product and engineering tomorrow and have everyone know exactly what to do? Not really. The ChatGPT RAID log didn’t name any owners, blockers, impacted systems, or business consequences. It looked strategic, but it was still just a polished placeholder.

In the “achieving enlightenment” example, ChatGPT gave a confident step-by-step process to achieve Nirvana. Yet I haven’t seen an influx of new Buddhas on the news lately, so I assume this is AI overconfidence.

I am a ’90s kid. We had to go to the library and find multiple primary sources when we wrote a term paper. The same rigor should apply when using AI as a work tool. Aum!

5. What role does middle management play in whether AI improves a company or turns into another layer of organizational noise?

James Markunas:

Bad middle management measures AI by activity: training completions, more summaries, more dashboards, more “look how modern we are” nonsense.

Good middle management measures AI by whether the work got better.

I knew a manager who loved redundancy but never delivered any value. He’d bring several AI notetakers into meetings, then dump the notes into ClickUp, creating a flood of redundant and non-actionable garbage. He also didn’t want vendors or clients seeing internal tickets, so he created exact copies of every ticket on an external-facing board. But the external tickets weren’t associated with the internal tickets, so they were useless as a delivery system.

On top of that, he loved nested Slack chains and infinite Slack channels with a strange mix of internal and external stakeholders. That became yet another funnel into the ticketing system.

At the end of every month, he’d ask, “How many hours did we spend on X?” or “Where are we on initiative Y?”

The honest answer was always: “I don’t know.”

We had every AI tool on the planet, a cornucopia of data, and extremely thorough meeting notes. Yet the system didn’t produce better delivery control or move ROI toward the black.

Microsoft’s internal AI skilling push is a great example of middle management getting AI right. They’re not treating AI training as one generic sermon for the whole company; they’re focusing on how AI applies to the way individual employees actually work. Their internal version of Copilot learns how each team and employee works, and then creates a bespoke learning track for that employee. That connects the tooling to the job context.

That’s the middle manager’s real job: translate hype into work.

Don’t tell a team to “use AI more.” Tell sales how it helps qualify accounts, sharpen messaging, and handle objections. Tell finance how it speeds up variance analysis and makes explanations cleaner. Tell marketing where it helps with research and drafting, and where human judgment still has to win.

6. Based on your work examining how AI changes operating behavior, how do internal politics shape AI adoption more than most companies are willing to admit?

James Markunas:

AI adoption is political because it shifts power dynamics: who has access to information, who can produce analysis, who can challenge experts, and who can automate work. That makes people nervous.

In the last few years, enterprise leadership has swung from irrational fear to irrational fantasy. First, companies were afraid employees would leak proprietary information or misuse AI tools. Then the conversation shifted to replacing workers and cutting costs. That whiplash tells you this is both a technology and a power conversation.

A few specific myths shape adoption, perception, and corporate politics:

- AI is going to steal all of our IP.

- AI is going to replace entry-level workers and middle management.

- AI is going to be an out-of-the-box, turnkey solution that instantly fixes broken business processes.

- Anyone with a computer is qualified to use it well.

Each myth creates a different political response. Legal and security teams may block usage. Executives may overpromise savings. Managers may use AI adoption to signal relevance. Employees may hide usage because they’re afraid of being judged or replaced.

The practical question is much less dramatic: What business outcome are we trying to improve, and can AI help improve it safely, measurably, and repeatably?

If the company can’t answer that, adoption becomes fiction. If it can, AI becomes part of the operating model.

7. Why do some organizations use AI to improve judgment, while others use it mainly to justify headcount pressure and short-term cost cutting?

James Markunas:

That’s a human nature question, not an AI question.

AI doesn’t decide whether a company is run by builders or extractors. It just gives both groups a sharper tool.

Some executives look at AI and see a cleaner story for layoffs. Cut headcount, lower expenses, improve margins, tell the market the company is becoming “AI-native,” and watch the stock react. That’s much easier than doing the harder work of building better products, opening new revenue streams, fixing broken operating models, or creating actual customer value.

That’s extraction. It treats the workforce as a cost center to be compressed, not an intelligence network to be upgraded. It pumps value out of the company instead of building value. It’s the Silver Surfer/Galactus model: consume the planet, harvest the energy, move on.

Other leaders look at AI and ask: How do we help people make better decisions? Where are customers getting stuck? Where are we wasting human judgment on work that a machine can safely compress?

That’s what a builder sounds like. They treat employees as the company’s operating intelligence. AI helps them see patterns sooner, pressure-test decisions, remove low-value work, and spend more time on the judgment calls that actually matter.

The split isn’t about AI maturity. It’s about management philosophy.

Some leaders use AI to create value. Others use it to justify taking value out.

8. In your view, can AI actually improve accountability inside companies, or does it more often blur responsibility by making decisions feel more objective than they are?

James Markunas:

AI doesn’t natively improve accountability. It turns it into a shell game where nobody owns the bag.

By default, AI blurs responsibility. The machine produces a clean, authoritative-looking recommendation, and suddenly people start treating it like gospel carved in silicon. Who prompted it? Who fed it the data? Who signed off on the parameters? Who owns the result if it’s wrong?

Nobody asks because the output looks objective.

That’s how executives end up hiding behind “the AI said,” as if it were an impartial oracle instead of a probabilistic system shaped by human choices, available data, and business assumptions.

Datadog’s autonomous agent is an elegant example of how to build an AI product that supports accountability instead of pretending to replace it. It crawls your infrastructure, investigates incidents, and helps surface root-cause recommendations faster. That’s valuable. But the root-cause analysis still lands on a human, or a group of humans, who decide what to fix.

AI can help accountability if you build it into the system: explicit ownership, human sign-off, audit trails, visible assumptions, clear data boundaries, and named decision-makers.

The trick is not to let AI blur the line between data-driven decision-making and responsibility laundering.

9. What do companies get wrong when they assume that automating more work automatically creates a stronger business?

James Markunas:

The best engineers I ever worked with were two guys named Nick, who we lovingly referred to as “The Nicks.” We were working together on a high-profile system build for New York Life. We were pre-launch and working off a staging environment, yet the client wanted three daily emails: one to notify them about upcoming deployments, one to notify them when the deployment started, and one to notify them that the deployment had successfully finished.

Mind you, the deployments were automated and set to happen every morning at 6AM.

I was a lead on the product team, and I shouldn’t be admitting this in writing, but I’m not a morning person. They call 6AM an ungodly hour for a reason.

When my Vice President of Product assigned me the task of sending those three daily emails, my first instinct was to figure out how to automate them so I could sleep until 8:30AM, a reasonable waking hour. I scheduled an urgent meeting with The Nicks to discuss the tooling. They suggested using Power BI to automate the emails based on our GitHub pipeline actions. It took us 15 minutes to build, and I was able to continue getting my beauty sleep.

The automation worked.

But the real question was: why were we sending those emails in the first place?

The Nicks and I built a useful automation for a redundant, low-value task that added no meaningful value to the product or program. That is the trap with automation.

Companies often ask, “Can we automate this?” when the better question is, “Should this work exist at all?”

Automation is powerful when it removes friction from work that matters. It’s not valuable when it preserves a bad process, accelerates a pointless workflow, or gives executives the feeling of progress without actually improving the business.

Automation is great. But it has to connect to operational processes and business value. Automating pointless tasks doesn’t make them less pointless. If your business is not poised to scale, AI won’t scale you, despite what Dan Martell says.