Microsoft, Google, and Elon Musk’s xAI have agreed to let the U.S. government see new artificial intelligence models before they are released to the public.

This gives federal officials a new way to review these systems for security risks as concerns grow about how advanced AI could be misused.

The May 5 deal allows the Commerce Department’s Center for AI Standards and Innovation, or CAISI, to check the models for national security risks.

This move puts some of the world’s leading AI developers into a more formal review process before their models are released, though participation is voluntary.

CAISI says the agreement lets it evaluate the models before they are launched and research their capabilities and security risks.

Why the government wants earlier access

The timing reflects a broader shift in how Washington views advanced AI.

Reuters reported that concern has grown worldwide in recent weeks, including among U.S. officials and businesses, about whether powerful new systems could help hackers. These worries have intensified as AI models become better at coding, automation, and problem-solving, capabilities that could be used for both defense and attack in cyberspace.

Chris Fall, who leads CAISI, said these reviews are a technical necessity, not just a formality. Reuters quoted him as saying that “independent, rigorous measurement science” is key to understanding advanced AI and its impact on national security.

This shows the government wants a more organized, evidence-based way to test what these models can do, instead of just trusting company claims or public tests.

A program that is getting bigger

The new arrangement with Microsoft, Google and xAI builds on earlier agreements already in place with OpenAI and Anthropic. Those relationships were established in 2024 under the Biden administration, when CAISI was still known as the U.S. Artificial Intelligence Safety Institute.

The agency said it has already completed more than 40 evaluations, including tests on cutting-edge systems that were not yet available to the public.

CNA said that developers often give CAISI versions of their models with fewer safety features, so the agency can test them more thoroughly for security issues. This is important because it means the government is not just looking at finished products, but also studying how these systems act when protections are lowered or taken away.

A wider national security push

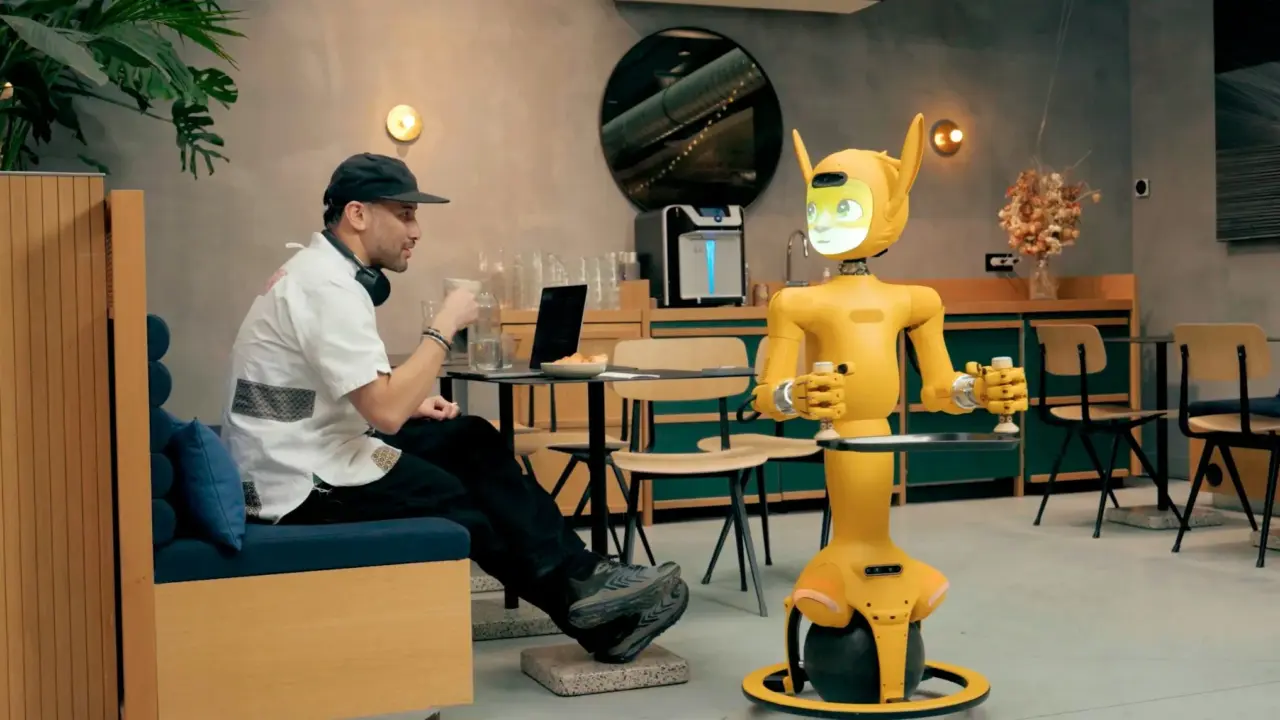

The AI review deal comes just days after another major announcement from the Pentagon. The Defense Department recently reached agreements with seven AI companies to use advanced technology on classified military networks, expanding the number of providers working with the military.

Anthropic was not included because it has been in a dispute with the department about safety rules for military use of its AI tools.

Together, these developments show the U.S. government moving on two fronts at once: adoption and scrutiny. One track is about bringing more AI into defense and national security systems. The other is about testing powerful models before release to determine whether they create unacceptable risks.

Microsoft and xAI did not immediately respond to requests for comment, while Google declined to comment.

For now, the most important signal is not what the companies are saying publicly, but that some of the biggest names in AI are now letting Washington look under the hood earlier than before.