For most of computing history, building software was expensive, slow, and difficult. This kept the number of new products and updates low, and most code changes took weeks to reach production.

By 2026, that has changed. AI-assisted coding, better cloud tooling, and reusable platforms have made software far cheaper and faster to create.

GitHub’s Octoverse 2025 says that “AI, agents, and typed languages” are driving the biggest changes in development in over a decade. GitHub also reports that “a new developer joins GitHub every second,” with over 36 million new developers in the past year and nearly 1 billion commits in 2025.

The paradox is that while it is easier than ever to build software at scale, it is harder to know exactly what that software contains, what it depends on, and what it might break.

Speed is no longer the hard part

This change matters because more code brings new trust challenges. As writing code gets cheaper, the main issue shifts from building software to making sure it is reliable.

GitHub’s data shows more than just growth; it points to a culture in which AI is part of daily work, and TypeScript’s rise suggests a preference for typed languages that give tools and AI assistants more structure to work with. This is progress, but code that is easier to write is not necessarily easier to verify.

Many organizations now find the real question is not “Can we build this?” but “Can we prove this release is safe, traceable, and trustworthy?” Meeting this standard is much harder, because it requires evidence about the process, the dependencies, and the quality of review, not just a working demo or passing tests.

AI can produce working code that still deserves suspicion

Research on AI-generated code helps explain why the trust gap is growing. In “Assessing the Quality and Security of AI-Generated Code: A Quantitative Analysis,” researchers tested 4,442 Java assignments and found that model outputs could pass functional checks but still contain “bugs, security vulnerabilities, and code smells.”

The paper points out serious issues like “hard-coded passwords” and “path traversal vulnerabilities,” and notes there was “no direct correlation” between passing unit tests and overall code quality or security.
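To make the gap between "passes its tests" and "is safe" concrete, here is a minimal Python sketch of a path traversal flaw. It is not taken from the paper; the helper names, the directory layout, and the file contents are all hypothetical and chosen only for illustration. Both helpers return the right answer for an ordinary filename, so a functional test that checks only that case would pass for either one.

```python
import tempfile
from pathlib import Path


def read_upload_naive(base_dir: Path, filename: str) -> str:
    """Hypothetical helper: joins user input onto a base directory unchecked."""
    return (base_dir / filename).read_text()


def read_upload_checked(base_dir: Path, filename: str) -> str:
    """Same result for normal names, but rejects paths that escape base_dir."""
    base = base_dir.resolve()
    target = (base / filename).resolve()
    if not target.is_relative_to(base):  # Path.is_relative_to needs Python 3.9+
        raise ValueError(f"refusing path outside upload directory: {filename!r}")
    return target.read_text()


def demo() -> dict:
    """Build a throwaway directory tree and exercise both helpers."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        uploads = root / "uploads"
        uploads.mkdir()
        (uploads / "notes.txt").write_text("hello")
        # Hypothetical sensitive file that lives outside the upload directory.
        (root / "secret.txt").write_text("db-password")

        results = {
            # The "functional test": both versions return the expected content.
            "naive_ok": read_upload_naive(uploads, "notes.txt") == "hello",
            "checked_ok": read_upload_checked(uploads, "notes.txt") == "hello",
            # The case the functional test never asked about.
            "naive_leaks": read_upload_naive(uploads, "../secret.txt") == "db-password",
        }
        try:
            read_upload_checked(uploads, "../secret.txt")
            results["checked_blocks"] = False
        except ValueError:
            results["checked_blocks"] = True
        return results


if __name__ == "__main__":
    print(demo())
```

The naive version is "working" by any test that only requests valid filenames. The difference shows up only when someone asks the question the test suite did not.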

Another study, “Security Vulnerabilities in AI-Generated Code: A Large-Scale Analysis of Public GitHub Repositories,” examined 7,703 AI-generated files and found 4,241 CWE instances across 77 types of vulnerabilities.

Even though 87.9% of the files had no CWE-mapped flaw, the authors still found important differences between languages, with Python showing higher vulnerability rates than JavaScript and TypeScript.
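The two headline numbers can look contradictory at first: most files are clean, yet there are thousands of CWE instances. A rough back-of-the-envelope calculation, using only the figures quoted above, suggests the flaws are concentrated in a minority of files. The derived values below are estimates and are not reported by the study; the 87.9% figure is rounded, so the results are approximate.

```python
TOTAL_FILES = 7_703     # AI-generated files examined, as quoted above
CWE_INSTANCES = 4_241   # CWE instances found, as quoted above
CLEAN_SHARE = 0.879     # share of files with no CWE-mapped flaw (rounded)


def flagged_file_estimate(total_files: int, clean_share: float) -> int:
    """Estimate how many files had at least one CWE-mapped flaw."""
    return round(total_files * (1 - clean_share))


def instances_per_flagged_file(instances: int, flagged_files: int) -> float:
    """Average number of CWE instances per flagged file (derived estimate)."""
    return instances / flagged_files


flagged = flagged_file_estimate(TOTAL_FILES, CLEAN_SHARE)
average = instances_per_flagged_file(CWE_INSTANCES, flagged)

if __name__ == "__main__":
    print(f"estimated flagged files: {flagged}")
    print(f"average CWE instances per flagged file: {average:.2f}")
```

Under these assumptions, roughly 930 files carry all of the findings, at an average of about 4.5 instances each. A high share of clean files therefore does not mean the risky files are only mildly affected.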

The point is not that AI coding is always careless. It is that “working” and “trustworthy” are now further apart than they have ever been.

Trust now lives deeper in the stack

This is why trust in software now depends more on where it comes from than how it looks. A smooth interface, fast updates, or easy onboarding still matter to users, but they are not enough to build real confidence.

NIST’s Secure Software Development Framework says secure practices should be used throughout the software life cycle to create “well-secured” software. Its companion document, SP 800-218A, extends the framework with practices specific to AI model development.

CISA makes a similar point about the supply chain. Its guide on Securing the Software Supply Chain says organizations need security checks to “protect software” and “produce well-secured products,” and its 2025 SBOM vision says software bills of materials (SBOMs) “illuminate the software supply chain” so risks can be addressed “early and consistently.” In short, trust increasingly rests on whether the people who made the software can explain how it was built.
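As a small illustration of what "explaining how it was built" can look like in practice, the sketch below reads an SBOM and lists components that lack basic identifying evidence. It assumes a CycloneDX-style JSON document with a top-level `components` array whose entries may carry `name`, `version`, `purl`, and `hashes` fields. The sample document, the component names, and the hash value are invented for the example, and which fields count as "required" is a policy choice assumed here, not something CISA or NIST prescribes.

```python
import json

# Hypothetical, hand-written SBOM fragment in CycloneDX-style JSON.
SAMPLE_SBOM = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {
      "type": "library",
      "name": "example-http-client",
      "version": "2.4.1",
      "purl": "pkg:pypi/example-http-client@2.4.1",
      "hashes": [{"alg": "SHA-256", "content": "0000000000000000000000000000000000000000000000000000000000000000"}]
    },
    {
      "type": "library",
      "name": "example-image-resizer",
      "purl": "pkg:pypi/example-image-resizer"
    }
  ]
}
"""

# Assumed policy: every component should state these fields.
REQUIRED_FIELDS = ("version", "purl", "hashes")


def components_missing_evidence(sbom_json: str) -> list[tuple[str, list[str]]]:
    """Return (component name, missing fields) for each incomplete component."""
    document = json.loads(sbom_json)
    findings = []
    for component in document.get("components", []):
        # A field counts as missing if it is absent or empty.
        missing = [field for field in REQUIRED_FIELDS if not component.get(field)]
        if missing:
            findings.append((component.get("name", "<unnamed>"), missing))
    return findings


if __name__ == "__main__":
    for name, missing in components_missing_evidence(SAMPLE_SBOM):
        print(f"{name}: missing {', '.join(missing)}")
```

A check like this says nothing about whether a dependency is safe. It only shows whether the inventory is complete enough for someone to start asking that question.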

The visible layer of trust is getting better

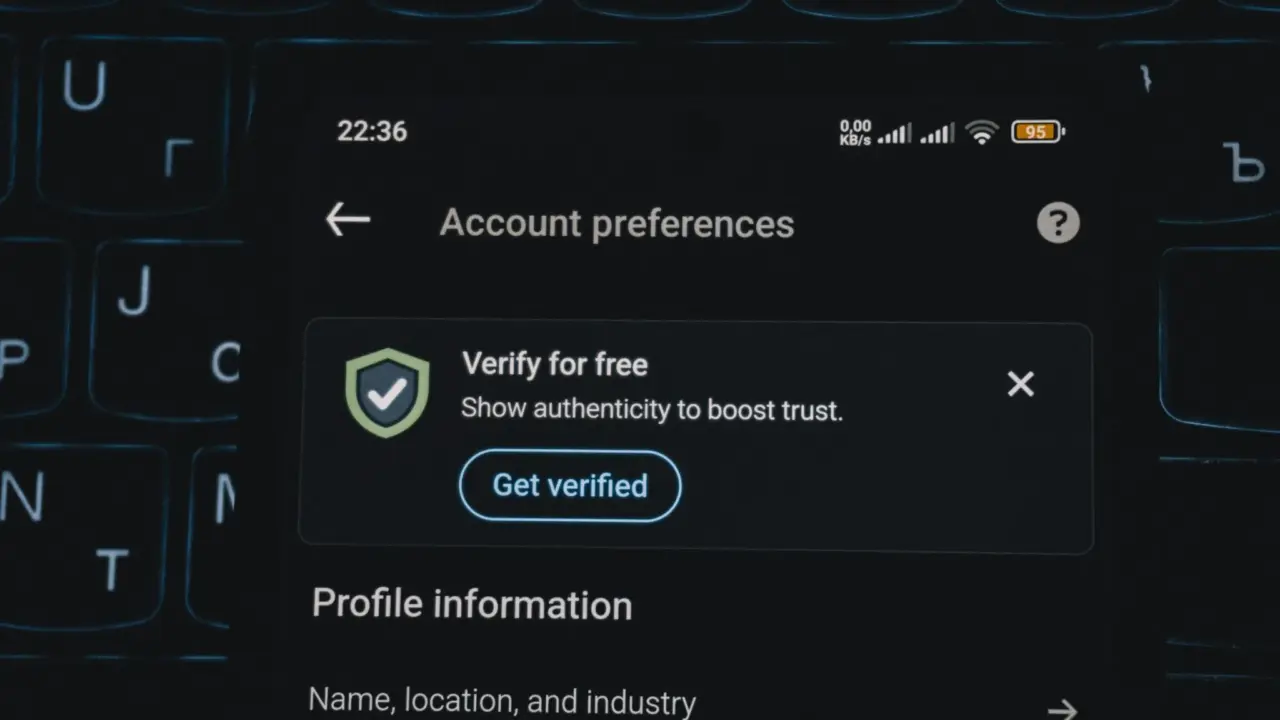

What makes this time interesting is that some parts of software are now easier to trust because they are simpler for users. Authentication is a good example.

FIDO’s Passkey Index 2025 shows passkey sign-ins had a 93% success rate, compared to 63% for other methods. Passkeys also cut sign-in time by 73% and, in some cases, reduced help desk calls about logins by up to 81%. Google’s Passkeys documentation calls passkeys a “safer and easier alternative to passwords.”

This matters because trust is not just technical; it is also about user experience. When users do not get locked out, have to reset passwords, or struggle with complicated sign-ins, software feels more reliable. But while the user experience is improving, the systems behind the scenes are getting more complex.

The new problem is proving what users cannot see

This divide is now the main challenge for software trust. Users can spot a better login experience, but they cannot easily see how AI-assisted code was created, which dependencies are included, how the software was built, or whether a vendor truly follows secure practices.

That is why trust is becoming more about formal processes and less about gut feeling. It now relies on clear procedures, supply-chain transparency, and strong security engineering.

CISA’s advice that software risks should be handled “early and consistently” reflects the view that modern software is too complex and fast-changing to judge by looks alone.

Research on AI-generated code also shows that convenience can quietly bring in problems just when teams feel most productive. The easier it is to release software, the more trust must be earned with proof that is not obvious in the product itself.

Easier to build, harder to trust

It is true that building software is getting easier. The evidence is clear, and the benefits are real: more developers can build useful tools, AI speeds up routine tasks, and passkeys are solving one of the internet’s oldest problems.

But trust is harder to earn now because speed has outpaced our instincts. The old signs of reliability, like a well-known brand, a polished app, a smooth login, or a feature that seems to work, no longer tell us enough about how the product was made or what risks it might have.

The next stage of software maturity will not be about who can write code the fastest. It will be about who can make fast software understandable, explainable, and defensible.

In that sense, the future of trustworthy software may depend less on writing more code and more on proving that the code, models, and components behind the scenes deserve our trust.